Perhaps you saw them outside London’s Natural History Museum last summer, or else in a Stockholm park, or in any number of places around the world: a travelling exhibit of stunning aerial photographs by Yann Arthus-Bertrand. Over 400 of them have just now also shown up in Google Earth’s Global Awareness base layer. Here’s the layer’s official site, and here is the blog announcement.

These photos provide hours’ worth of ogling pleasure. Until now, my main frustration with them was wondering where they were taken. No more. My new frustration with them is that they are georeferenced but not — we need to neologize here — geopositioned, by which I mean placed as overlays in Google Earth with the tilt and roll and zoom and field of view set just so, so that we can see exactly where the shot was taken from.

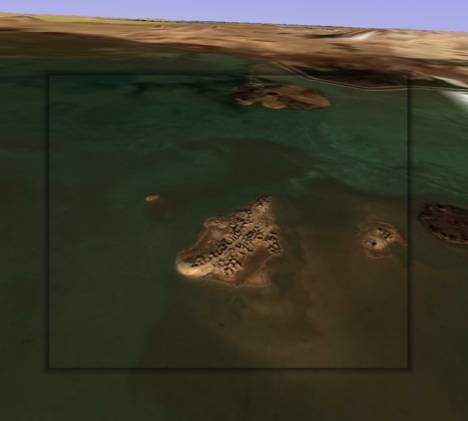

To show you what I mean, I took a photo of islands in a lake in Egypt’s Siwa oasis and positioned it in Google Earth so that when you fly into the picture, it “exactly” matches the view in Google Earth. Here is the KMZ file.

I confess it took me a long time to fidget with the controls to get that image to fit, but I was trying to make a point:-) Use the opacity slider to check the accuracy.
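For anyone who would rather type numbers than drag handles, here is a minimal sketch of how such a geopositioned photo could be written out as a KML PhotoOverlay. The function name `photo_overlay_kml` and all the sample values are my own placeholders (they are not the values in the KMZ above), and I am assuming a symmetric field of view with the photo centred on the optical axis.

```python
import xml.etree.ElementTree as ET

KML_NS = "http://www.opengis.net/kml/2.2"


def photo_overlay_kml(name, href, lon, lat, alt_m, heading, tilt, roll,
                      h_fov_deg, v_fov_deg, near_m=1000.0):
    """Build a KML document containing a single PhotoOverlay.

    Hypothetical helper: the camera pose and field of view are whatever
    you found by trial and error; nothing here estimates them for you.
    """
    ET.register_namespace("", KML_NS)  # serialise without an ns0: prefix

    def add(parent, tag, text=None):
        el = ET.SubElement(parent, f"{{{KML_NS}}}{tag}")
        if text is not None:
            el.text = str(text)
        return el

    kml = ET.Element(f"{{{KML_NS}}}kml")
    overlay = add(kml, "PhotoOverlay")
    add(overlay, "name", name)

    # Where the camera was and how it was pointed (tilt 0 = straight down).
    camera = add(overlay, "Camera")
    for tag, value in (("longitude", lon), ("latitude", lat),
                       ("altitude", alt_m), ("heading", heading),
                       ("tilt", tilt), ("roll", roll),
                       ("altitudeMode", "absolute")):
        add(camera, tag, value)

    add(add(overlay, "Icon"), "href", href)

    # Field of view, split evenly either side of the optical axis (assumption).
    view = add(overlay, "ViewVolume")
    add(view, "leftFov", -h_fov_deg / 2.0)
    add(view, "rightFov", h_fov_deg / 2.0)
    add(view, "bottomFov", -v_fov_deg / 2.0)
    add(view, "topFov", v_fov_deg / 2.0)
    add(view, "near", near_m)  # distance at which the photo is drawn

    add(add(overlay, "Point"), "coordinates", f"{lon},{lat},{alt_m}")
    return ET.tostring(kml, encoding="unicode")


if __name__ == "__main__":
    # Placeholder pose, roughly over Siwa; not the real shot parameters.
    print(photo_overlay_kml("Siwa islands", "siwa.jpg",
                            25.5, 29.2, 1500, 40, 60, 0, 60, 40))
```

Saving the output as a .kml file next to the image should be enough to open it in Google Earth, after which the numbers can be nudged by hand.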

Geopositioning all photos is not really a scalable proposal — unless we all did one each, of course. Also, not all images lend themselves to this treatment — some are too close, others are of inconstant patterns such as drifting sandbanks.

Voila, yet another way Google Earth will quash your productivity — unless you can call it “research” for a blog post:-)

[Update 17:10 UTC: It just struck me that it would be very cool if there were to be a button in Google Earth that let you “paint” that photo from that perspective on top of the base layer, taking into account the height mesh (DEM) data and buildings, so that you could subsequently fly over it. I have no idea whether that would be a trivial feature to implement, but it is definitely where research projects are headed, and it would make for a great way to turn 2D images into 3D images. Google’s PhotoOverlay controls are obviously sufficient to accurately geoposition such a shot. Scalability remains the main problem, here, I think.]

It shouldn’t be that hard to implement, but it won’t be perfect — anything that isn’t flat will get the wrong perspective, much as buildings seem wrong and only look right when viewing from the original perspective. As long as people don’t mind, it’s a good feature to think about.

BTW, one of the original ideas with photo overlays was exactly to allow perspective images to be warped such that they can lie “flat” on the terrain (this is very similar to the normal orthorectification process) to enable a more collectively-defined (and more detailed) earth texture.

What would also be relatively straightforward is a button that says “fix the position” of a photo overlay, given an image and a user’s rough guess of its position. Once the pixels are close enough to compare, an algorithm would do a better job of aligning the 3D rendering and the 2D photo for a perfect match. Finding the position without such close correspondence, though, would be a much harder problem.
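To make the “close enough to compare” step concrete, here is a deliberately tiny stand-in, not the real thing: it only searches whole-pixel shifts between two small grayscale grids and keeps the one with the lowest mean squared difference. A real “fix the position” button would have to adjust the full camera pose (position, heading, tilt, roll, field of view). The names `mean_sq_diff` and `refine_offset` are made up for this sketch.

```python
def mean_sq_diff(photo, render, dx, dy):
    """Mean squared difference over the overlap when the photo is
    shifted by (dx, dy) pixels relative to the rendered view."""
    total, count = 0.0, 0
    for y, row in enumerate(photo):
        ry = y + dy
        if not 0 <= ry < len(render):
            continue
        for x, value in enumerate(row):
            rx = x + dx
            if 0 <= rx < len(render[ry]):
                total += (value - render[ry][rx]) ** 2
                count += 1
    return total / count if count else float("inf")


def refine_offset(photo, render, max_shift=3):
    """Try every shift within max_shift of the user's rough guess and
    return the (dx, dy) that matches the rendered view best."""
    candidates = [(dx, dy)
                  for dy in range(-max_shift, max_shift + 1)
                  for dx in range(-max_shift, max_shift + 1)]
    return min(candidates, key=lambda s: mean_sq_diff(photo, render, *s))


if __name__ == "__main__":
    # Toy data: the "photo" is a 5x5 crop of an 8x8 "render".
    render = [[x + 10 * y for x in range(8)] for y in range(8)]
    photo = [[render[y + 1][x + 2] for x in range(5)] for y in range(5)]
    print(refine_offset(photo, render))
```

The search only works because the starting guess is already within a few pixels, which is the point made above: without that rough correspondence there is nothing sensible to compare.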

Avi, this is where I think Street View is a superior source — but in mapping from ‘internal perspective’ to texture building faces. I still don’t think I’d take an ‘overlay’ approach at this time, because you still run into a brick wall with scalability for use in other applications.

The fundamental problem in my mind is scalability. On one hand, sure, you’re taking varying ‘modular elements’ as content [ie: bare polygons, photographs, text data, etc] and establishing a greater content or contextual entity, thus attempting to preserve the uniqueness of each elemental resource. But the problem with this approach may be that you would have difficulty realistically taking the generated entity out as its own ‘product’, at least within this concept.

If, though, you are able to ‘export’ the entity as a unique whole, one that contains all the modular elements that went into its creation, you would then be looking at a rendering strategy to establish one usable component [ie: a texturized model]. This would then conceivably be the path to scalability in this concept of a system.

I think it would be a somewhat lofty ambition at this time — though not an impossible one.

The painting of photos idea might be limited in practice to photos taken with a long focal length.

I was also very disappointed that they’re not geopositioned. Nice alignment though in your KMZ.

I’ve been experimenting with such geopositioned photos for a few years (some old video is at http://www.snapmap.com). The scope for beautiful animations and UIs is enormous. I am sure we will indeed soon see geo-addicts adding this data to other people’s photos. Of course “all photos ever taken” won’t get this data (no, not even with PhotoSynth!), but millions of notable photos will.

For positioning the photo in Google Earth, how about adapting the old overlay control? If adjustments are restricted to isosceles trapezoids (as seen in Microsoft bird’s-eye view) then we can determine camera position and orientation for full-frame shots. Or one can imagine matching up points on photo and imagery to adjust the trapezoid indirectly (though non-flat landscapes make that a bit hairy), which might (if I could deal with the maths) also work for non-full-frame shots.
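As a sanity check on the trapezoid idea, here is a rough sketch of the easy (forward) direction only: given a camera height, tilt and field of view, where do the four corners of a full-frame shot land on perfectly flat ground? The assumptions are mine: no roll, flat terrain, tilt measured from straight down, and a made-up function name `ground_footprint`. Going the other way, from a dragged trapezoid back to the camera, would mean solving these same relations for height and tilt.

```python
import math


def ground_footprint(height_m, tilt_deg, h_fov_deg, v_fov_deg):
    """Corners (x, y) in metres of the ground area seen by the camera.

    The camera sits height_m above the origin and looks along +y.
    tilt_deg is 0 for straight down and approaches 90 at the horizon.
    Returns near-left, near-right, far-left, far-right.
    """
    t = math.radians(tilt_deg)
    a = math.tan(math.radians(h_fov_deg) / 2)  # half-width of the frame
    b = math.tan(math.radians(v_fov_deg) / 2)  # half-height of the frame
    corners = []
    for sb in (-b, b):  # bottom edge of the frame, then top edge
        # Downward component of the ray through this edge.
        down = math.cos(t) - sb * math.sin(t)
        if down <= 0:
            raise ValueError("frame edge is at or above the horizon")
        scale = height_m / down  # ray length factor until it hits the ground
        y = scale * (math.sin(t) + sb * math.cos(t))
        for sa in (-a, a):  # left corner, then right corner
            corners.append((scale * sa, y))
    return corners


if __name__ == "__main__":
    for corner in ground_footprint(100, 30, 40, 30):
        print(corner)
```

With no roll the left and right corners are mirror images and the far edge is wider than the near edge, which is exactly the isosceles trapezoid; any roll or terrain relief breaks that symmetry.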

Hi !

Have you seen my KMZ : http://www.nicolasfaure.fr ?

This is the basis of the official Arthus-Bertrand layer I did for Google and Arthus-Bertrand this summer. I started this work more than 2 years ago. Now, almost 800 photos are localized, and half of them are overlays. Take a look :o)

Nicolas

Beautiful!

I have also been wondering about this thing where Google Earth and Maps don’t match. Besides, that is beautiful! I love the sand island, it seems so cool.